Computer security is a topic that frequently emerges when talking with a manufacturing business that is curious about “digital transformation” or “Industry 4.0”.

I find that a lot of SMEs are sceptical about Industry 4.0, insofar as they can’t see a practical way forward to realise the potential benefits. They are just about persuaded that their business transaction databases can be “secure”, but any talk of connecting machinery to a network so that real-time data can be recorded for processing is a step too far.

Their fears are not unfounded. Any business must protect its operations from the leakage, loss and misuse of its data. If we start bolting sensors on to factory plant, and connecting those sensors to other systems to enable greater efficiencies, we increase the number of opportunities for process data to be exposed. And process data is where the intellectual property (IP) of many manufacturers lies.

A lot of effort has been expended in the development of secure communication networks and computer systems, but the information breaches that become public only serve to reinforce the security fears of SMEs.

When your competitive edge is defined by your process IP, you will be especially motivated to protect it.

A machine operator discussing their work over a beer at the local bar might reveal some information that gives a clue as to what the business does differently. But this is still relatively benign next to the situation where you have access to all of the data that is being generated by a factory.

You can do a lot with data, and the more data you possess, the easier it becomes to recreate a scenario. So anyone who gains access to a factory’s process data is far better placed to reconstruct what makes that process distinctive.

However, Industry 4.0 is not just about collecting data. It is also about predicting the future using in-process forecasting; developing enhanced methods of visualising complex data to aid comprehension; using computational resources to automate and delegate physical actuation; and creating models of the future that can be reasoned with to improve coordination, scheduling and resource utilisation.

So, what do manufacturers need?

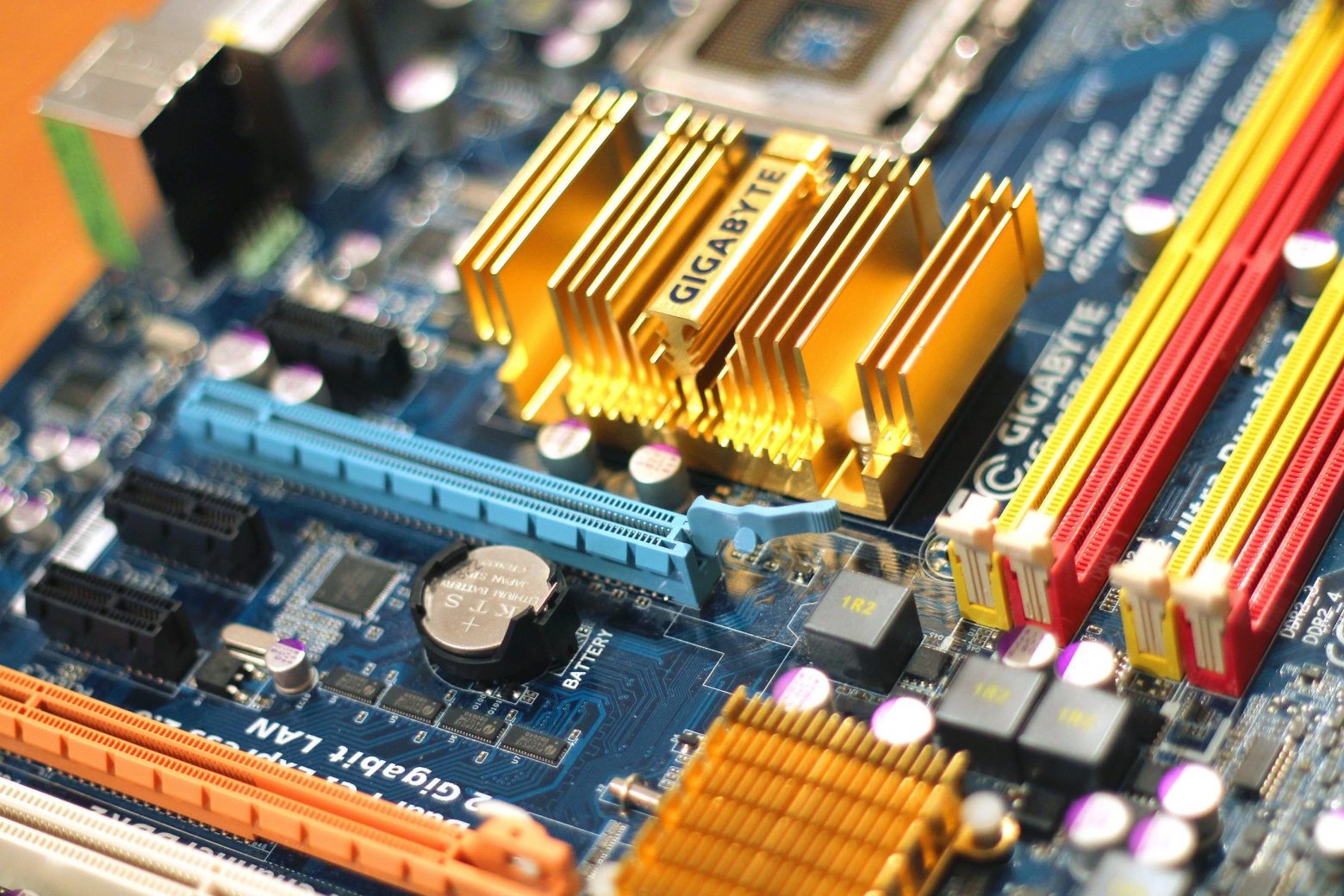

Common installations of Industrial Digital Technology (IDT) require sensors of various types, embedded systems to process the data that is captured, and associated networking infrastructure to transport the data to a centralised storage and processing facility. That facility is typically a cloud, which may reside off the premises.

Making better use of the computational resources at the endpoints of networks has given rise to “Edge Computing” where more of the data processing is “pushed” towards the devices at the edge of a network. This has become possible since hardware is continuously becoming more capable and less costly to deploy.

Edge Computing does have much to offer manufacturers, particularly by allowing process data to be analysed much closer to the operation that generates it. However, while some processing can occur at the edge, more significant processing still requires more capable hardware. If that hardware is provided via a cloud, an encrypted connection to that cloud will be required so that the analysis can be done.
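As a rough illustration of what “pushing” processing towards the edge can mean in practice, the sketch below reduces a window of raw sensor readings to a small summary on the device itself, and decides locally whether anything needs to be passed on for heavier analysis. The function names, the temperature values and the alarm limit are all hypothetical, chosen purely for the example; a real deployment would depend on the sensor, the process and the hardware available.

```python
from statistics import mean


def summarise_window(readings):
    """Reduce a window of raw sensor readings to a compact summary."""
    if not readings:
        raise ValueError("window must contain at least one reading")
    return {
        "count": len(readings),
        "mean": mean(readings),
        "min": min(readings),
        "max": max(readings),
    }


def needs_escalation(summary, limit):
    """Decide on the device whether the window warrants deeper analysis."""
    return summary["max"] > limit


# Hypothetical spindle temperatures (degrees C) sampled at the machine.
window = [61.0, 62.5, 61.5, 75.0]
summary = summarise_window(window)

# Only the summary, not the raw readings, would need to leave the device,
# and only when the (assumed) limit of 70.0 is exceeded.
if needs_escalation(summary, limit=70.0):
    print("forward summary for further analysis:", summary)
```

The point of the design is that the raw readings never have to leave the machine; only a handful of numbers do, and only when there is a reason to send them.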

Since remote cloud resources are a source of security fears for manufacturers, there is a need to provide the capability to perform Industry 4.0 analytics and visualisation within the confines of an organisation’s firewall.

How can the research community respond to this need?

Microservices Architecture is one approach to the development of agile and robust software systems that may be suited to Edge Computing environments. As we place greater demands upon our systems, and ask new functions of systems that were not part of the original requirements specifications, there is a need for systems that can scale elastically, much like clouds do for utility computing.

Such architectures may also support the development of capabilities that engender trust between smart objects. Manufacturing systems contain many physical objects, some of which interact with each other. If we want to automate the logistics of objects within a manufacturing value-chain, we shall need to ensure that there are workable trust mechanisms so that the correct interactions can take place.

The certificate authority model of trust cannot scale for a world of smart objects, and this is a driver for research into multi-party authentication schemes, as well as distributed ledger approaches to data and identity provenance.
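To give a flavour of the ledger idea, the sketch below shows only the tamper-evidence core that such approaches build on: each record carries a hash of the record before it, so altering an earlier entry breaks every later link. It is not a distributed ledger, since there is no replication or consensus between parties, and the record fields and object names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(payload, prev_hash):
    """Hash a record's payload together with the previous record's hash."""
    body = json.dumps({"payload": payload, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_record(chain, payload):
    """Append a provenance record linked to the current end of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append(
        {"payload": payload, "prev": prev_hash, "hash": _digest(payload, prev_hash)}
    )
    return chain


def verify_chain(chain):
    """Return True only if every record still matches its stored hash and link."""
    prev_hash = GENESIS
    for record in chain:
        if record["prev"] != prev_hash:
            return False
        if record["hash"] != _digest(record["payload"], prev_hash):
            return False
        prev_hash = record["hash"]
    return True


# Hypothetical events for one smart object moving through a factory.
chain = []
append_record(chain, {"object": "pallet-17", "event": "left press line"})
append_record(chain, {"object": "pallet-17", "event": "arrived at paint shop"})
print("chain intact:", verify_chain(chain))
```

If any party later edits an earlier payload, `verify_chain` returns `False`, which is the property that makes a shared history of object interactions auditable.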

Once we can confidently deliver insightful analytics and automation within a manufacturer’s firewall, I’m sure that the uptake of IDT will accelerate.